What is Digital Change Management: Definition, Strategy, Frameworks & ROI

Tushar Dublish

Millions of dollars are flowing into new software. AI platforms. Cloud migrations. Automation tools. Digital workflows. And in most organizations making those investments, adoption stalls around 40%. Employees circle back to old habits. Managers quietly tolerate workarounds. Expensive tools sit half-used.

The technology works. The people don't follow.

There is a second layer to this problem that most organizations miss. Even when the technology is objectively better, it still demands behavioral change. And behavior does not change just because software exists.

This is where a new category of tools is emerging — systems designed not just to deliver functionality, but to reduce the amount of change required from the user. Instead of forcing employees to adapt to the tool, the tool adapts to existing workflows.

And this is not a technology problem. It is a change problem. And it has a name: the absence of digital change management.

These are not failure stories about bad software choices. They are failure stories about organizations that treated a human problem as a technical one.

This guide explains what digital change management actually is, why it keeps getting underestimated, and what the organizations that do it well actually do differently. Whether you are rolling out an enterprise AI assistant, migrating to a new CRM, or standardizing workflows across departments, the same principles apply.

What Digital Change Management Is (And What It Is Not)

Start by getting the definition right. It matters.

Digital change management is the structured discipline of guiding people through shifts in how they work with technology. It focuses not just on introducing new tools, but on reshaping habits, decision-making patterns, and day-to-day behaviors so that the technology actually becomes part of how work gets done.

It covers everything on the human side of a digital initiative: leadership alignment, stakeholder communication, training design, resistance management, feedback systems, and behavioral reinforcement. All working together to turn deployment into sustained, measurable adoption.

Some modern internal knowledge search tools are now being designed with this principle built in. ActionSync, for example, does not treat change management as an afterthought layered on top of the product. It reduces the need for change itself by embedding intelligence directly into existing workflows, lowering the activation energy required from users.

In practice, this means designing the entire experience of change. What employees hear before launch, what they try on day one, what support they receive in week two, and what signals they observe from leadership over the next 90 days. It is the difference between a system being available and a system being relied upon. Without this discipline, even the best technology remains optional in the minds of users, and optional tools rarely become essential workflows.

The most effective modern systems are starting to embed this principle into their design. Instead of introducing entirely new environments, they layer intelligence on top of tools employees already use, thereby reducing friction, shortening learning curves, and accelerating adoption by default.

This shift is important. Because the less change you ask from people, the more likely they are to actually change.

The word "digital" distinguishes it from classical organizational change management, which was designed for slower, more structural or cultural shifts such as reorganizations or policy changes. Digital change management deals specifically with technology transitions, where the pace is faster, the rollouts are more frequent, and the impact on daily workflows is immediate and continuous. Employees are not just adapting once. They are adapting repeatedly, often across multiple tools at the same time.

Because of this, digital change management requires a different level of precision and consistency. It must operate in shorter cycles, respond quickly to feedback, and integrate directly into the flow of work rather than sitting alongside it. It is less about managing a single transformation and more about building an organizational capability to absorb ongoing change without losing productivity, trust, or momentum.

Four Things Digital Change Management Is Not

Not a project plan. A project plan tracks milestones, timelines, and budgets. Change management tracks people. You can be perfectly on schedule with zero actual adoption. Many organizations are.

Not a training program. Training is one element of change, not even the most important one. Prosci research found that 38% of AI adoption challenges stem from insufficient training. The other 62% come from factors training alone cannot fix: lack of trust, unclear purpose, poor leadership signals, and cultural resistance.

Not a launch announcement. Sending a company-wide email is announcing change. Managing change happens in the months before and after that email.

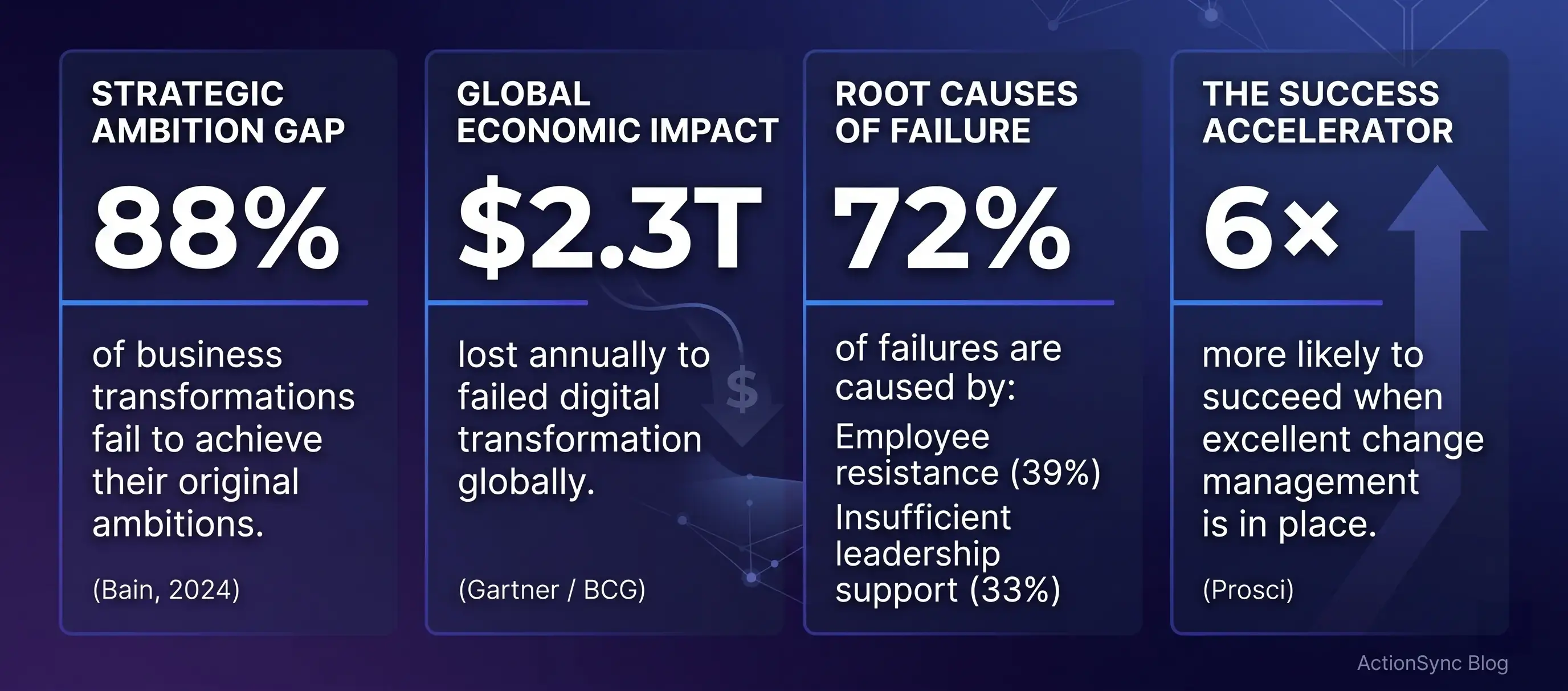

Not optional. Change management is often treated as a nice-to-have. It's the first thing cut when budgets tighten. Prosci's research shows that projects with excellent change management are 6 times more likely to meet their objectives than those with poor or no change management. The cost of skipping it almost always exceeds the cost of doing it.

Why Transformation Keeps Failing: The Real Root Causes

If the same organizations keep failing at change initiatives despite knowing the statistics, there has to be a reason. There is. Several, actually.

Reason #1: The technology gets the budget. The people do not.

A typical enterprise will spend 12 months selecting software, negotiating contracts, completing integration work, and setting up infrastructure. The change management budget (if one exists) is a fraction of the technology spend, often tacked on in the final weeks before launch.

Technology gets 90 cents of every dollar. Change management gets the remaining dime. The result is a technically capable system that nobody uses the way it was intended.

Reason #2: People resist loss, not change

This is the most underestimated insight in all of change management, and it has significant practical implications.

Employees are not resistant to change as a personality trait. Humans adopt new things constantly: new phones, new routes to work, new social apps. What people resist is loss. When technology changes, people sense what they stand to lose:

Competence: the feeling of mastery they had with the old system

Social status: the informal authority that came from knowing more than others

Autonomy: the freedom to work their own way

Job security: especially acute with AI tools

A senior analyst who has spent five years mastering your legacy BI tool is not obstructing progress. She is watching a system that made her valuable being replaced by a platform where she starts over as a beginner. That is a rational response to a real loss. It will not be resolved by a training video.

Pro Tip: Before your next rollout, add a "loss inventory" to your stakeholder map. For each group, ask: what are they losing? What competencies, routines, or informal power? Then design communication that acknowledges that loss before selling the benefits of what replaces it.

Reason #3: Change fatigue is silently killing your initiatives

Most organizations running major transformations are running multiple ones simultaneously. A new ERP. A new collaboration platform. A new AI assistant. A new performance review system. Each arrives with its own announcement, its own training, its own asks on employee attention.

A 2025 analysis found that the average employee attends 16 change-related meetings per week — a 20% increase from just two years prior. When that capacity is exceeded, something gives. It is almost always the newest initiative.

Common Mistake: Change fatigue is not laziness. It is cognitive overload. If your teams seem disengaged in your latest rollout, the issue may not be the tool. It may be the accumulation of everything that came before it.

This is why “adding another tool” is often the wrong answer, even if the tool is powerful. Every new system competes for attention.

The better approach is consolidation (or augmentation). Instead of introducing one more destination, leading organizations are adopting systems that sit across existing tools and unify workflows without increasing cognitive load.

Enterprise assistant platforms like Action Sync follow this augmentation model. They do not replace your existing stack. They connect it. That distinction matters because replacing systems demands high behavioral change, while augmenting them allows adoption to happen incrementally, within familiar environments.

Reason #4: Middle managers are the missing variable

Organizations invest heavily in executive sponsorship and frontline training. The layer in between (middle managers) is consistently undertreated. This is a strategic error. Middle managers are the primary transmission mechanism for change. This is the point where strategy turns into day-to-day behavior.

They translate executive intent into operational reality. They decide what gets discussed in team meetings, what gets prioritized under pressure, and what quietly gets ignored. If they are unclear, unconvinced, or unsupported, the change fractures at the exact layer responsible for scaling it.

A manager who uses the new platform in meetings sends a signal. A manager who asks for reports from the new system reinforces that signal. A manager who never mentions it (or continues to accept outputs from the old system) also sends a signal. Employees calibrate their behavior based on these cues far more than on official communications.

A 2024 CEO survey found that 58% of managers felt disempowered to escalate issues during change initiatives. When managers feel they cannot question timelines, flag usability gaps, or request additional support, they compensate by shielding their teams. That often means allowing workarounds or quietly deprioritizing the new system.

Effective change management treats middle managers as a distinct audience. They need early visibility into the change, not last-minute briefings. They need dedicated training that goes beyond features and focuses on how to lead their teams through the transition. They need clear expectations from leadership and permission to push back when something is not working.

You cannot fix frontline adoption without fixing manager alignment first. In most organizations, the fastest way to accelerate adoption is not more training for employees. It is deeper enablement for the managers who shape their behavior every day.

The Architecture of Effective Digital Change Management

The organizations that navigate digital change successfully share a set of structural practices that most others skip, rush, or underinvest in.

1. Start with a Change Readiness Assessment

Before you plan a rollout, assess the soil you are planting in. A change readiness assessment examines three things:

The organization's history with change — was the last major initiative a success or a cautionary tale?

The current change load — how many active initiatives are employees already absorbing?

Cultural appetite — is there inherent skepticism about this type of change, or genuine openness?

This assessment shapes everything downstream. It tells you how much runway you need before launch, surfaces pockets of resistance, and identifies where leadership alignment is fragile.

Pro Tip: Run a pulse survey two to three months before launch. Ask three questions:

Do you understand why this change is happening?

Do you feel prepared?

Do you trust leadership will support you through it?

The gaps in those responses tell you exactly where to focus first.

2. Build a Case for Change Around People, Not Projects

Every organization creates a business case for its technology investments. Very few create a case for change, and the two documents serve entirely different audiences.

A business case is written for approvers. It speaks the language of ROI, NPV, and strategic alignment.

A case for change is written for the people who will live with the decision every day. It answers:

Why is this happening, and why now?

What is wrong with the current way we work?

What specifically will improve for me once we change?

What is in it for me — not the company, me?

Who do I talk to when I need help?

Real Example: A mid-sized SaaS company rolling out an AI-powered knowledge platform initially communicated: "This will improve information retrieval and break knowledge silos." 30-day adoption was under 20%.

They rewrote the case around a single insight from employee surveys: the average employee spent 45 minutes per day searching for information.

New message: "This tool gets those 45 minutes back." Active usage climbed to 58% in the next 30 days. Same tool. Same launch. Different story. Completely different outcome.

3. Stakeholder and Loss Mapping

Standard stakeholder mapping identifies groups, their level of impact, and their current stance. This is necessary but not sufficient.

What most maps miss is the loss inventory: for each group, what specifically are they losing? The customer success manager who built her own account-tracking system in spreadsheets is not just getting a new CRM. She is losing a system she spent two years perfecting. That loss needs to be acknowledged before the new tool can be embraced.

Pro Tip: Segment stakeholders not just by department, but by impact type. A high-impact, high-resistance segment needs a completely different intervention than a high-impact, high-readiness one. Do not run the same playbook across groups that are starting from different places.

4. Design a Communication Cadence, Not a Campaign

Most change communication is structured like a product launch: a burst of energy at announcement, then silence. Effective change communication is sustained, two-directional, and responsive.

Research shows that 29% of employees say changes are not communicated clearly. And this confusion, not resistance, becomes the primary driver of non-adoption. People do not adopt what they do not understand.

| Phase | Timing | Goal | Key Actions |
| --- | --- | --- | --- |
| Awareness | 2–3 months before | Create readiness | Explain the why, set expectations, acknowledge disruption honestly |
| Preparation | 3–4 weeks before | Build knowledge | Role-specific previews, Q&A sessions, introduce support resources |
| Activation | Launch week | Enable first steps | Clear first actions, celebrate early adopters, low-friction help |
| Reinforcement | Month 1–6 post-launch | Sustain adoption | Share wins, respond to friction publicly, visible leadership use |

Pro Tip: Use the channels where employees already live. If your team is in Slack all day, change communication goes in Slack, not on a separate intranet page no one visits. Meeting people in existing workflows reduces friction and increases reach.

5. Restructure Training as Contextual, Role-Specific, and Just-In-Time

The default approach to training has not evolved much: schedule sessions, gather employees, walk through features, send the recording, mark the box. This has a documented problem: the forgetting curve.

People forget approximately 50% of new information within an hour, 70% within 24 hours, and 90% within a week, unless the information is immediately applied in context. Training employees on a system they will not touch for another three weeks is a scheduled forgetting exercise.

| Training Principle | What It Means | What to Avoid |
| --- | --- | --- |
| Role-specific | Cover only the 5–10 actions each role actually needs | Generic "everyone" sessions that cover everything shallowly |
| Just-in-time | Deliver at the moment of need, not weeks in advance | Scheduling training before the tool is even accessible |
| Applied, not passive | Sandbox environments, live scenarios, peer practice | Video-only training with no application opportunity |
| Continuous | Refresh as the tool evolves; AI skills half-life is 3–4 months | One-time onboarding treated as complete change management |

Real Example: A 400-person technology company created role-specific "quick start" cards: one page per role, covering only the five actions each team needed on day one. This reduced training time by 60% and achieved 3x higher first-week adoption compared to their previous general two-hour session.

6. Build Your Change Champion Network Before Launch

Change champions (early adopters who advocate genuinely and provide peer-level support) are the single most cost-effective investment in adoption. They are not HR staff. They are the informal leaders inside each department: the person others go to when they have a problem, whose opinion carries weight in the hallway.

The champion network serves three functions no official communication plan replicates:

Peer credibility: A colleague saying "this made my job easier" lands differently than an executive saying it.

Real-time support: Champions absorb informal questions that would otherwise get answered with "just use the old way."

Feedback collection: Champions hear what is actually happening on the ground: where people struggle and where early wins are appearing.

McKinsey research on AI adoption specifically found that millennial managers (ages 35–44) are among the most enthusiastic early adopters: 62% report high AI expertise, versus 50% of Gen Z employees. This demographic is your most powerful champion pool if engaged deliberately.

Pro Tip: Identify champion candidates two to three months before launch. Brief them before the announcement reaches the broader organization. Give them early tool access. Make them feel like they are shaping the rollout, because they are.

7. Create a Formal Feedback Loop and Use It Visibly

The most effective signal an organization can send during a change initiative is that employee input changes something.

When employees see that a problem they raised got addressed, they invest in the process. When feedback goes into a void, they disengage and stop adopting.

A formal feedback loop needs:

A defined collection cadence, such as weekly pulse surveys, bi-weekly focus groups, and open channels

A defined person responsible for synthesizing feedback

A defined response process, even if the response is 'we heard this and here is why we are not changing it'

The visible response matters as much as the action. Employees do not expect perfection. They expect to be heard.

The ADKAR Model Applied: A Diagnostic, Not Just a Framework

The Prosci ADKAR model is one of the most actionable frameworks for managing digital change at the individual level. Most people use it as a planning checklist. Its real power is as a diagnostic tool.

| Stage | The Question It Answers | What Stalling Here Looks Like | The Fix |
| --- | --- | --- | --- |
| Awareness | Does each person understand why the change is happening? | People cannot explain the reason for the change | More communication about purpose, not more training |
| Desire | Do they want to participate? | They understand but do not care or actively avoid | Address individual benefits; build trust; involve them |
| Knowledge | Do they know how to change? | They want to but do not know how | Role-specific training; job aids; peer support |
| Ability | Can they do it in real work conditions? | They passed training but cannot execute under pressure | Practice in realistic conditions; coaching; reduced load |
| Reinforcement | Is the change being sustained? | Early adoption drops off after 30–60 days | Recognition; accountability; visible leadership use |

Most organizations only invest in Knowledge and Ability. They provide training and maybe a sandbox environment. But if someone does not understand why they are changing (Awareness) and does not want to change (Desire), no amount of training fixes the problem. You are teaching someone who has already decided to ignore what you teach.

If adoption is stalling at 90 days, run a quick ADKAR diagnosis. Survey a sample of low-adoption users:

Can you describe why this system was introduced?

Did you feel involved in the decision?

Do you feel confident using it?

Do you feel recognized when you use it well?

Each answer points to a specific stage and a specific intervention.
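The diagnosis above can be sketched as a small lookup. This is a minimal illustration, not a Prosci tool: the mapping of each survey question to an ADKAR stage is an interpretation of the table above, and the short question keys, the `diagnose` function, and the 50% cutoff are all hypothetical choices.

```python
# Survey questions mapped to ADKAR stages, in stage order.
# The question-to-stage mapping and the fixes are taken from the table above;
# the keys and the cutoff are illustrative assumptions.
DIAGNOSTIC = [
    ("why_introduced", "Awareness", "More communication about purpose, not more training"),
    ("felt_involved", "Desire", "Address individual benefits; build trust; involve them"),
    ("confident", "Knowledge/Ability", "Role-specific training; practice in realistic conditions"),
    ("recognized", "Reinforcement", "Recognition; accountability; visible leadership use"),
]


def diagnose(yes_rates, cutoff=0.5):
    """Return (stage, fix) for the earliest stage whose share of 'yes' answers
    falls below the cutoff, or None if no stage is below it."""
    for key, stage, fix in DIAGNOSTIC:
        if yes_rates.get(key, 1.0) < cutoff:
            return stage, fix
    return None


# Hypothetical survey results from a sample of low-adoption users.
sample = {"why_introduced": 0.8, "felt_involved": 0.3, "confident": 0.4, "recognized": 0.9}
print(diagnose(sample))
```

Checking stages in order reflects the point made earlier: a gap in Awareness or Desire should be addressed before investing further in training.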

Digital Change Management in the Age of AI: What Is Different Now?

Most change management principles apply consistently across technology transitions. But AI tools introduce dimensions that traditional frameworks were not built to address.

The trust problem is categorically different

When organizations roll out a new CRM or project management tool, employees may dislike it. But they do not distrust it. AI tools carry a different kind of skepticism. Employees are uncertain whether outputs are reliable, when to trust the system, and when to override it.

This requires a different kind of preparation. Employees need to understand, at a conceptual level, how the AI tool works. Not technically, but well enough to develop calibrated trust.

What is it good at?

When should I verify before acting?

What happens when it is wrong?

Common Mistake: One bad AI output, shared by one early adopter with three colleagues, can collapse adoption in an entire team. Without a foundation of calibrated trust built before launch, you are one visible mistake away from a credibility crisis.

Replacement anxiety is real and cannot be managed with vague reassurance

A Prosci study of 1,107 professionals found that 63% of organizations cite human factors as the primary challenge in AI implementation. Most of those human factors are rooted in a question employees are too cautious to ask out loud: is this tool here to help me or replace me?

If the organization's public message is "this AI will make you more productive" but the underlying strategy involves headcount reduction, employees will sense the gap. No communication strategy bridges that credibility deficit. Leaders need to be explicit (not vague) about AI's role in the workforce strategy.

Employees are already using AI informally

McKinsey research from 2025 found that employees are using AI tools at work three times more than their leaders realize. The issue is not the refusal to use AI. It is that employees are using consumer AI tools unofficially, and the organization has no visibility, no governance, and no way to ensure quality or security.

Effective AI change management accounts for this. It does not pretend employees are starting from zero. It acknowledges informal AI use, learns from what they have already discovered, and redirects that energy into the officially supported platform.

Pro Tip: Before launching your enterprise AI platform, informally survey employees about which AI tools they already use at work. The answers will reshape your communication strategy, training design, and expectations for where enthusiasm (and resistance) will be strongest.

The 48% rule: what actually drives AI adoption

McKinsey's 2025 workforce research found that 48% of US employees say they would use AI tools more often if they received formal training. And 45% say they would use them more if the tools were integrated directly into their daily workflows, not in a separate application they have to remember to open.

The most practical design principle for AI change management: the tool needs to come to the work, not the other way around. AI assistants embedded in Slack, email, or your CRM get used. AI platforms that require a deliberate context switch to a separate interface get forgotten.

Integration is not just a technical decision. It is a change management decision.

This is precisely where ActionSync AI operates. By embedding AI capabilities directly into existing workflows, it removes the need for context switching. Instead of asking employees to adopt AI, it allows them to encounter AI in the course of their normal work, which dramatically increases usage.

Measuring Digital Change: What to Track and When

Change management without measurement is hope dressed as strategy. Here is a practical framework for tracking what actually matters, and when.

Week 1–2 Post-Launch: Baseline Activation

| Metric | What It Tells You | Warning Threshold |
| --- | --- | --- |
| % of users who logged in | Whether the announcement created action | Below 60% is a red flag |
| Average session length | Whether people explored or just clicked once | Under 3 minutes suggests confusion |
| Support ticket volume | Whether onboarding created clarity or chaos | Spike signals training gaps |
| Manager check-in completion | Whether the bridge layer is activated | Below 80% means the bridge is broken |

Days 30–45: Adoption Velocity

| Metric | What It Tells You | Warning Threshold |
| --- | --- | --- |
| Monthly Active Users (MAU) | Real adoption, not just activation | Below 40% requires immediate intervention |
| Feature utilization rate | Whether core value is being delivered | Surface-only use = shelfware in progress |
| Workaround prevalence | Whether the tool competes with old habits | Any formal workarounds signal a gap |
| Employee confidence score | Self-reported readiness | Below 60% means ability barriers remain |
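The numeric thresholds in the two tables above lend themselves to an automated check. The following is a rough sketch under stated assumptions: the metric names, the `Threshold` class, and the `flag_warnings` function are hypothetical, and only the thresholds that are expressed as numbers in the tables are included.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Threshold:
    metric: str      # hypothetical metric name, not tied to any real analytics tool
    minimum: float   # observed values below this trigger a warning
    warning: str


# Numeric thresholds taken from the Week 1-2 and Days 30-45 tables.
THRESHOLDS = [
    Threshold("login_rate", 0.60, "Announcement did not create action"),
    Threshold("avg_session_minutes", 3.0, "Short sessions suggest confusion"),
    Threshold("manager_checkin_rate", 0.80, "Manager bridge layer is not activated"),
    Threshold("monthly_active_rate", 0.40, "Requires immediate intervention"),
    Threshold("confidence_score", 0.60, "Ability barriers remain"),
]


def flag_warnings(observed: Dict[str, float]) -> List[str]:
    """Return one warning per observed metric that falls below its threshold."""
    return [
        f"{t.metric}: {t.warning}"
        for t in THRESHOLDS
        if t.metric in observed and observed[t.metric] < t.minimum
    ]


# Hypothetical readings for one department.
sample = {"login_rate": 0.72, "avg_session_minutes": 2.1, "monthly_active_rate": 0.38}
for warning in flag_warnings(sample):
    print(warning)
```

Qualitative signals such as support ticket spikes or workaround prevalence are left out here because the tables do not give them a numeric cutoff.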

Day 90: Stickiness Assessment

This is the most critical checkpoint. Patterns set at 90 days tend to hold. Users who are active at 90 days typically stay active. Users who are not rarely recover without direct intervention.

At 90 days, you want answers to:

Active vs. churned user breakdown by department

Which managers have highest and lowest team adoption rates

Three most common friction points

Whether old-system usage has declined proportionally

Build a simple dashboard showing week-over-week adoption trends from day one. A downward trend at any point in the first 45 days is a critical signal. Organizations that catch it early and respond (with targeted outreach, extra training, or manager escalation) recover fast. Organizations that discover it at 90 days rarely do.
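A dashboard like this mostly needs one alert: the first week in which adoption falls. Here is a minimal sketch, assuming you already have a list of weekly active-user rates starting at launch; the `first_decline` function and the seven-week window (a rough stand-in for the first 45 days) are illustrative assumptions.

```python
from typing import List, Optional


def first_decline(weekly_rates: List[float], window_weeks: int = 7) -> Optional[int]:
    """Return the 1-based week in which adoption first fell week over week
    within the monitoring window, or None if it never fell."""
    window = weekly_rates[:window_weeks]
    for index in range(1, len(window)):
        if window[index] < window[index - 1]:
            return index + 1
    return None


# Hypothetical weekly active-user rates since launch.
rates = [0.62, 0.65, 0.66, 0.61, 0.58]
week = first_decline(rates)
if week is not None:
    print(f"Adoption first declined in week {week}: start targeted outreach")
```

In practice you might require a decline of more than a point or two before alerting, since small week-to-week dips can be noise.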

At this point, a pattern should be clear. The most effective way to manage change is not always to push harder on communication, training, or enforcement. It is often to reduce the amount of change required in the first place. This is where system design and change management converge.

How ActionSync Is Built for This Reality

Most enterprise AI platforms are designed to be deployed. ActionSync is designed to be used. The difference matters in the context of everything this guide has covered. Adoption is the hard problem. Deployment is the easy one.

ActionSync connects to the tools your teams already use — Slack, your CRM, your knowledge base, your project management systems — and surfaces intelligence where work is already happening. This design choice directly addresses the adoption barrier McKinsey identified: 45% of employees would use AI tools more if they were integrated into their daily workflows rather than sitting separately.

When an AI assistant lives where your team already works, the change management problem is fundamentally smaller. There is no new application to remember to open. No separate login to maintain. No context switch required. The capability shows up inside familiar interfaces, which means the behavior change you are asking for is much more modest.

For enterprises deploying AI assistants, practical change management looks like this:

Start with the most acute pain. Identify the team where information chaos is most expensive. High urgency creates high motivation and your best early-win stories.

Make the first wins visible. When a team member saves 30 minutes in a single workflow, Slack it. Mention it in team meetings. Early, specific wins spread faster than any announcement.

Build champions from the middle. Team leads and senior ICs who other people watch are your most powerful adoption drivers. Get them comfortable first.

Close the feedback loop. Collect it. Act on it. Communicate what changed. That cycle builds the trust that sustains adoption.

Frequently Asked Questions (FAQs)

Q: What is the difference between change management and project management?

Project management delivers the technology on time and within budget. Change management ensures the people affected actually adopt it. You can execute a perfect project and still have zero adoption. The two disciplines address different failure modes and require different skills.

Q: What does a complete digital change management plan actually include?

A complete plan covers: change readiness assessment, stakeholder and loss mapping, case for change by audience, communication cadence, role-specific training design, champion network, resistance identification and response, formal feedback loop, and adoption measurement framework. Most organizations build three to five of these. The ones left out are usually where the failure happens.

Q: How do I calculate the ROI of change management?

Model it against adoption rates. A $500,000 annual software investment that achieves 35% adoption delivers a fraction of the value of the same investment at 75% adoption. Change management is the lever that moves you between those numbers. The cost of the program almost always pays for itself in avoided shelfware and productivity recovery alone.
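As a rough illustration of that model, the sketch below treats realized value as directly proportional to adoption, which is a simplifying assumption. The $75,000 program cost and the function names are hypothetical, chosen only to show the arithmetic.

```python
def realized_value(annual_investment: float, adoption_rate: float) -> float:
    """Value realized, assuming it scales linearly with adoption (a simplification)."""
    return annual_investment * adoption_rate


def change_management_roi(annual_investment: float, baseline_adoption: float,
                          target_adoption: float, program_cost: float) -> float:
    """Net return per dollar spent on the change management program."""
    gain = (realized_value(annual_investment, target_adoption)
            - realized_value(annual_investment, baseline_adoption))
    return (gain - program_cost) / program_cost


investment = 500_000    # annual software spend from the example above
program_cost = 75_000   # hypothetical change management budget

roi = change_management_roi(investment, 0.35, 0.75, program_cost)
print(f"Value at 35% adoption: ${realized_value(investment, 0.35):,.0f}")
print(f"Value at 75% adoption: ${realized_value(investment, 0.75):,.0f}")
print(f"Return per dollar of change management spend: {roi:.2f}")
```

A fuller model would substitute your own estimate of value per active user rather than using license cost as a proxy.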

Q: How long should active change management run?

Budget six to twelve months for any initiative touching more than 50 people or significantly altering daily workflows. Major enterprise-wide transformations often require 18 to 24 months. The most common mistake is funding change management through launch and cutting it immediately after — precisely when reinforcement is most critical.

Q: What if senior leadership is not genuinely committed?

This is the hardest scenario and one of the most common. McKinsey identifies leadership misalignment as the top cause of change failure. The most practical approach: quantify the risk in financial terms (wasted license spend, productivity cost, likely re-implementation expense, etc.) and escalate explicitly. Change managers who wait for leadership to come around rarely succeed. The conversation needs to happen early and directly.

Q: How is change management different for AI tools specifically?

Three key differences: First, employees carry more trust-related concerns with AI than with traditional software, requiring calibrated trust-building before launch. Second, fear of job displacement is more acute and must be addressed directly, not with vague reassurance. Third, employees are likely already using informal AI tools, so the task is not introduction but redirection.

Conclusion

Digital change management, done well, does not feel like a program. It feels like leadership.

Employees know why the change is happening. They feel prepared for it. They have people they can ask for help. They see their feedback reflected in how the rollout evolves. They watch their managers using the new system, which tells them the change is real. They see their early wins recognized, which tells them the organization notices.

The technology becomes something they choose rather than something that was done to them.

That is the goal. Not just adoption, but ownership. The difference between the two is the difference between a tool people use because they must and a tool people use because it genuinely helps them work.

The organizations winning on this right now are not the ones with the biggest technology budgets. They are the ones who treat the human side of change with the same rigor they apply to the technical side.

The question is simple: when your next initiative launches, which of those organizations will you be?

This is also why the next generation of enterprise tools will not win on features alone. They will win on how little they ask users to change. Enterprise AI copilots like ActionSync represent that shift—from tools that demand adoption to systems that enable it by design.

👉 Ready to see what this looks like in practice? Book a FREE demo of Action Sync and explore new possibilities for your workflows.